Animal Detection and Action Recognition Using Histograms of Oriented Gradients and Dense Optical Flow

Authors

Christoffersen, Henrik Møss ; Sørensen, Thomas Balling

Term

4th term

Publication year

2011

Submitted on

2011-05-31

Pages

106

Abstract

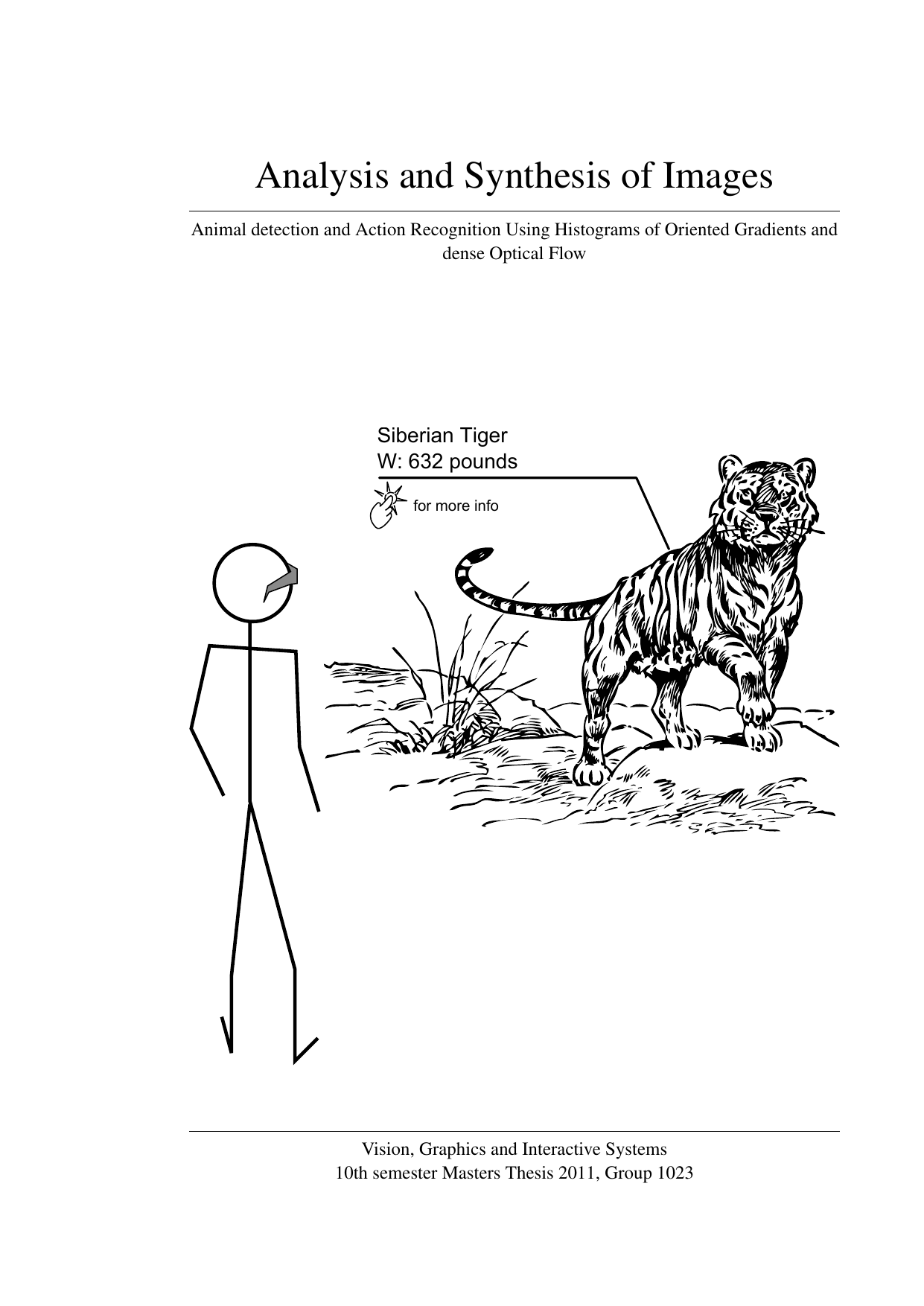

This thesis explores how to enhance the visitor experience in a zoological park by overlaying information directly in the user’s view through a camera-equipped head-mounted display. The project develops a two-stage classification system built on cascaded SVMs. It combines Histograms of Oriented Gradients (HOG), used for appearance-based detection and recognition of specific animals, with dense optical flow, used to build multi-frame motion maps for action classification. Optical flow is computed on the GPU, and a simple compensation step reduces the influence of camera motion on the motion frames. Each stage is verified independently: on a small dataset, the system performs well at detecting elephants and at recognizing two elephant actions. To assess generalization, action recognition is also evaluated on the six-action KTH dataset, where the results are less convincing and indicate a need for further improvement. The work shows the potential of combining HOG and dense optical flow for animal detection and action recognition in an augmented reality setting, while highlighting the importance of larger datasets and improved robustness.

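The abstract does not say how the camera-motion compensation works. As a purely illustrative sketch, one simple option is to treat the median flow vector of a frame as the global camera motion, subtract it from every pixel, and sum the residual magnitudes over several frames to form a motion map. The function names `compensate_camera_motion` and `motion_map`, the median-based estimate, and the toy flow field are all assumptions made for this example, not the method used in the thesis.

```python
from math import hypot
from statistics import median


def compensate_camera_motion(flow):
    """Subtract the median flow vector (assumed to be camera motion) from each pixel.

    `flow` is a list of rows, each row a list of (dx, dy) tuples.
    """
    med_dx = median(dx for row in flow for dx, _ in row)
    med_dy = median(dy for row in flow for _, dy in row)
    return [[(dx - med_dx, dy - med_dy) for dx, dy in row] for row in flow]


def motion_map(flows):
    """Accumulate per-pixel residual flow magnitude over a sequence of flow fields."""
    height, width = len(flows[0]), len(flows[0][0])
    acc = [[0.0] * width for _ in range(height)]
    for flow in flows:
        residual = compensate_camera_motion(flow)
        for y, row in enumerate(residual):
            for x, (dx, dy) in enumerate(row):
                acc[y][x] += hypot(dx, dy)
    return acc


# Toy input: the camera pans right by 2 px everywhere,
# and the centre pixel additionally moves by (3, 4).
frame = [[(2.0, 0.0)] * 3 for _ in range(3)]
frame[1][1] = (5.0, 4.0)

# Two identical frames: only the centre pixel keeps residual motion.
result = motion_map([frame, frame])
```

In this toy case the pan is removed entirely and only the independently moving pixel contributes to the map. A median is used here because it stays close to the background motion as long as the moving subject covers less than half of the frame.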

[This abstract has been generated with the help of AI directly from the project full text.]

Keywords