Training an EMG-Based Machine Learning Model to Classify Hand Gestures in a Spatial Virtual Reality Environment

Authors

Olsen, Tóki Lava ; Johansen, Charlotte ; Sørensen, Kristian Hedegaard

Term

4th term

Education

Publication year

2025

Submitted on

2025-05-27

Pages

14

Abstract

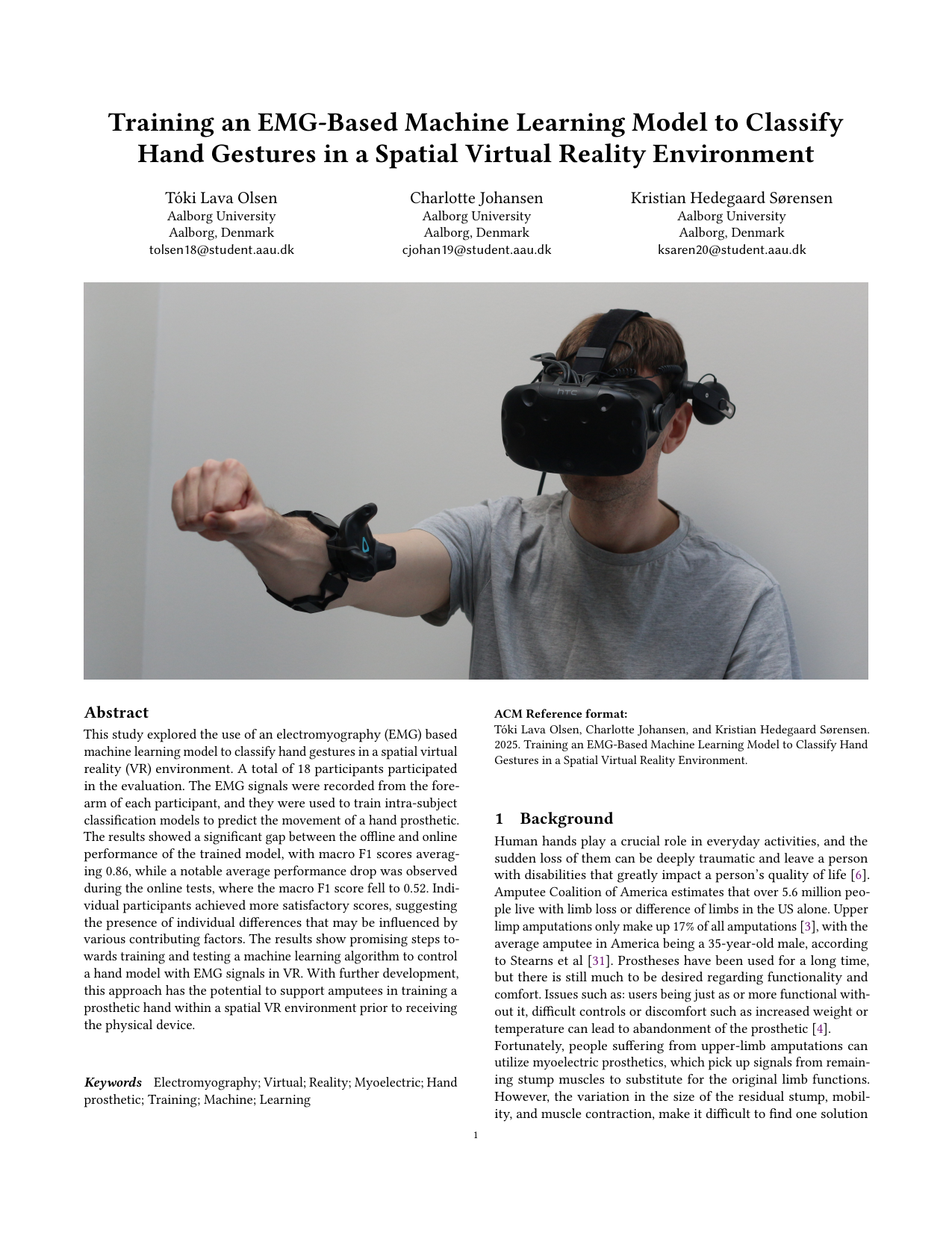

This study tested whether a machine learning model based on electromyography (EMG) can recognize hand gestures in a spatial virtual reality (VR) environment. EMG captures the electrical signals produced by muscles; here, signals were recorded from the forearm. Eighteen participants took part, and an individual (intra-subject) classification model was trained for each person to predict movements for controlling a hand prosthesis. The model performed strongly in offline tests using pre-recorded data (average macro F1 score of 0.86), but its performance dropped in real-time online tests (macro F1 score of 0.52). Macro F1 is a standard classification metric that balances correct and missed/false detections across gesture classes; higher is better (maximum 1.0). Some individuals achieved better-than-average scores, indicating person-to-person differences influenced by various factors. Overall, the results mark promising steps toward training and evaluating algorithms to control a hand model with EMG in VR, with potential to help amputees practice prosthetic hand control in a spatial VR setting before receiving the physical device.
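The macro F1 score quoted above can be illustrated with a minimal sketch: F1 is computed separately for each gesture class and the per-class scores are averaged with equal weight. The gesture names and label sequences below are hypothetical and are not taken from the study's data; the function is an illustration of the metric, not the project's evaluation code.

```python
from collections import Counter


def macro_f1(y_true, y_pred):
    """Return the unweighted mean of per-class F1 scores."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and equally long")

    # Count true positives, false positives and false negatives per class.
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted class was wrong
            fn[truth] += 1  # true class was missed

    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        # F1 = 2*TP / (2*TP + FP + FN), defined as 0 when the denominator is 0.
        denom = 2 * tp[label] + fp[label] + fn[label]
        scores.append(2 * tp[label] / denom if denom else 0.0)

    # Every class contributes equally, regardless of how often it occurs.
    return sum(scores) / len(scores)


# Hypothetical gesture labels, for illustration only.
y_true = ["rest", "fist", "open", "fist", "open", "rest"]
y_pred = ["rest", "fist", "fist", "fist", "open", "open"]
score = macro_f1(y_true, y_pred)
print(f"macro F1 = {score:.2f}")
```

Because each class is weighted equally, a gesture that is rarely performed but often misclassified lowers the score as much as a common one would.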

[This abstract has been rewritten with the help of AI based on the project's original abstract]

Keywords