Smart Vision-Guided Robotic Depalletizing System

Author

Hjorth, Christian Aleksander

Term

4th semester

Education

Publication year

2025

Abstract

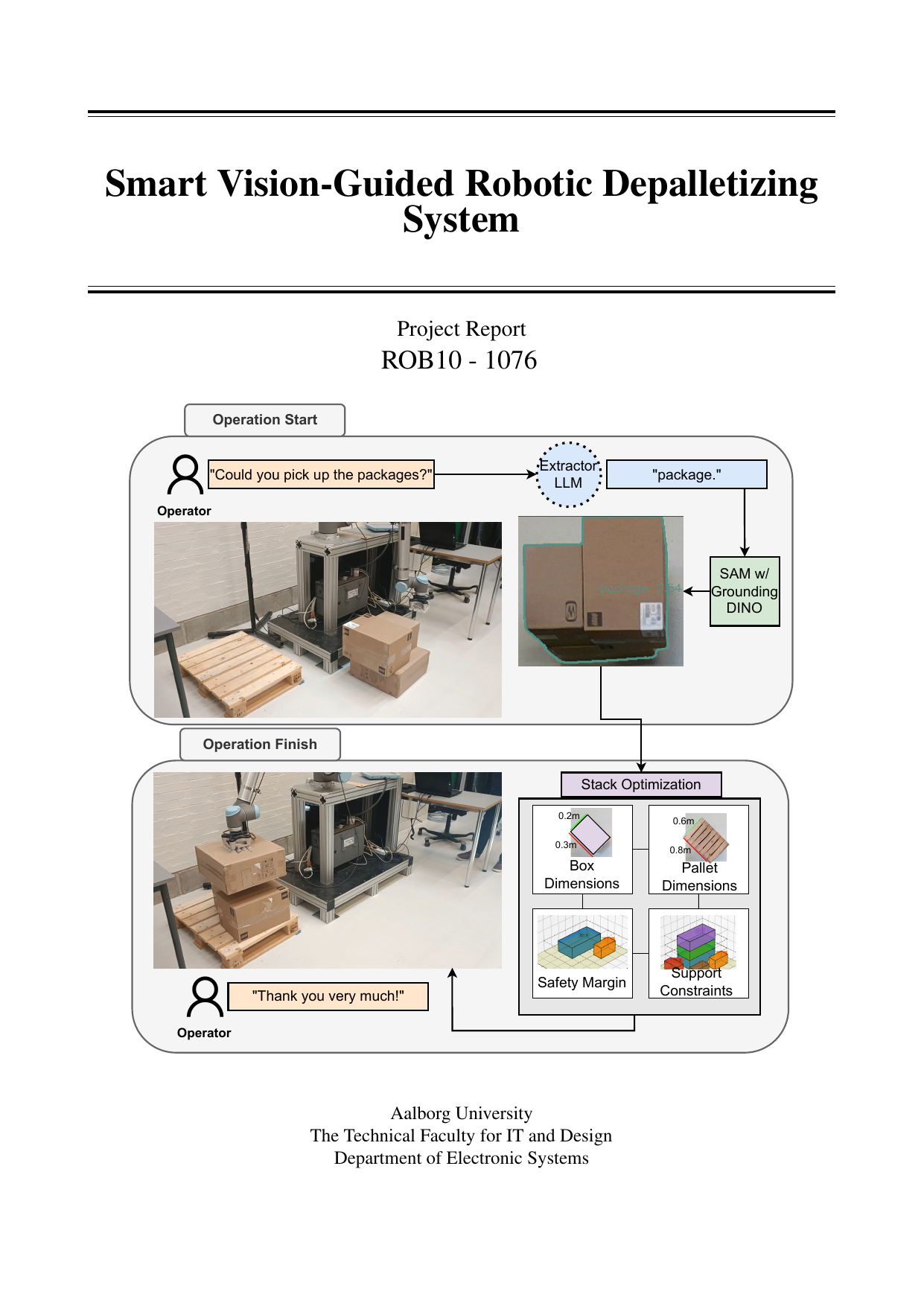

This thesis presents the design and implementation of a flexible, vision-guided robotic system for depalletizing and palletizing in dynamic logistics settings. It addresses common limitations of existing solutions that depend on known box sizes, predefined placements, or extensive training. The core research question is whether combining RGB-D perception with language-driven, promptable vision can enable generalizable object handling and intelligent stacking without prior instance-specific training. Built in ROS 2 with MoveIt2, the system integrates a UR10 robot, a VG10 vacuum gripper, and an Intel RealSense D455 camera. A dual-LLM pipeline parses natural-language operator commands to extract the target object label, which then prompts GroundingDINO and SAM to segment items directly from RGB images. Using depth data, cuboid pose and top-face dimensions are estimated (via RANSAC) to plan grasps, with grasp quality monitored via gripper pressure. Retrieved boxes are placed using constraint-based stacking optimization that accounts for pallet dimensions, support constraints, and safety margins. Real-world testing indicates reliable perception, grasping, and placement, supporting the approach’s feasibility. Key contributions include integrating LLMs for natural-language interaction, promptable segmentation for adaptable object identification, and constrained optimization for stable, space-efficient stacking. Identified improvement areas include camera configuration, pose estimation for visually similar objects, and refined prompting.
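The abstract states only that cuboid pose and top-face dimensions are estimated from depth data via RANSAC. As a rough illustration of that idea, the following minimal sketch fits a plane to a segmented point cloud and measures the extent of the inlier points along their principal in-plane axes. The function names, the iteration count, the 5 mm inlier tolerance, and the SVD-based extent estimate are all assumptions made for this example and are not taken from the thesis.

```python
import numpy as np


def fit_plane_ransac(points, n_iters=200, inlier_tol=0.005, seed=0):
    """Fit a plane normal.p + d = 0 to an (N, 3) array with a basic RANSAC loop.

    n_iters and inlier_tol (metres) are illustrative defaults, not thesis values.
    """
    rng = np.random.default_rng(seed)
    best_inliers = np.zeros(len(points), dtype=bool)
    best_model = None
    for _ in range(n_iters):
        # Sample three distinct points and build the plane through them.
        sample = points[rng.choice(len(points), size=3, replace=False)]
        normal = np.cross(sample[1] - sample[0], sample[2] - sample[0])
        norm = np.linalg.norm(normal)
        if norm < 1e-12:
            continue  # collinear sample, no unique plane
        normal = normal / norm
        d = -normal.dot(sample[0])
        # Points closer to the plane than the tolerance count as inliers.
        inliers = np.abs(points @ normal + d) < inlier_tol
        if inliers.sum() > best_inliers.sum():
            best_inliers, best_model = inliers, (normal, d)
    if best_model is None:
        raise ValueError("no non-degenerate sample found")
    return best_model[0], best_model[1], best_inliers


def top_face_dimensions(inlier_points, normal):
    """Estimate centroid, length and width of a planar patch (hypothetical helper)."""
    centroid = inlier_points.mean(axis=0)
    centered = inlier_points - centroid
    # Drop the out-of-plane component so only in-plane spread remains.
    in_plane = centered - np.outer(centered @ normal, normal)
    # The first two right-singular vectors are the principal in-plane axes.
    _, _, vt = np.linalg.svd(in_plane, full_matrices=False)
    along_u = in_plane @ vt[0]
    along_v = in_plane @ vt[1]
    length = along_u.max() - along_u.min()
    width = along_v.max() - along_v.min()
    return centroid, length, width
```

In a pipeline like the one described, `points` would presumably be the depth pixels inside the SAM mask, back-projected to 3D. The plane normal and the centroid would then give a candidate grasp pose for the vacuum gripper. A production system would more likely rely on an existing point-cloud library than on a hand-written loop like this.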


[This abstract was generated with the help of AI directly from the project's full text]