Own-Voice Retrieval for Hearing Assistive Devices: A Combined DNN-Beamforming Approach

Author

Garde, Julius

Term

4th semester

Education

Publication year

2019

Submitted on

2019-06-07

Abstract

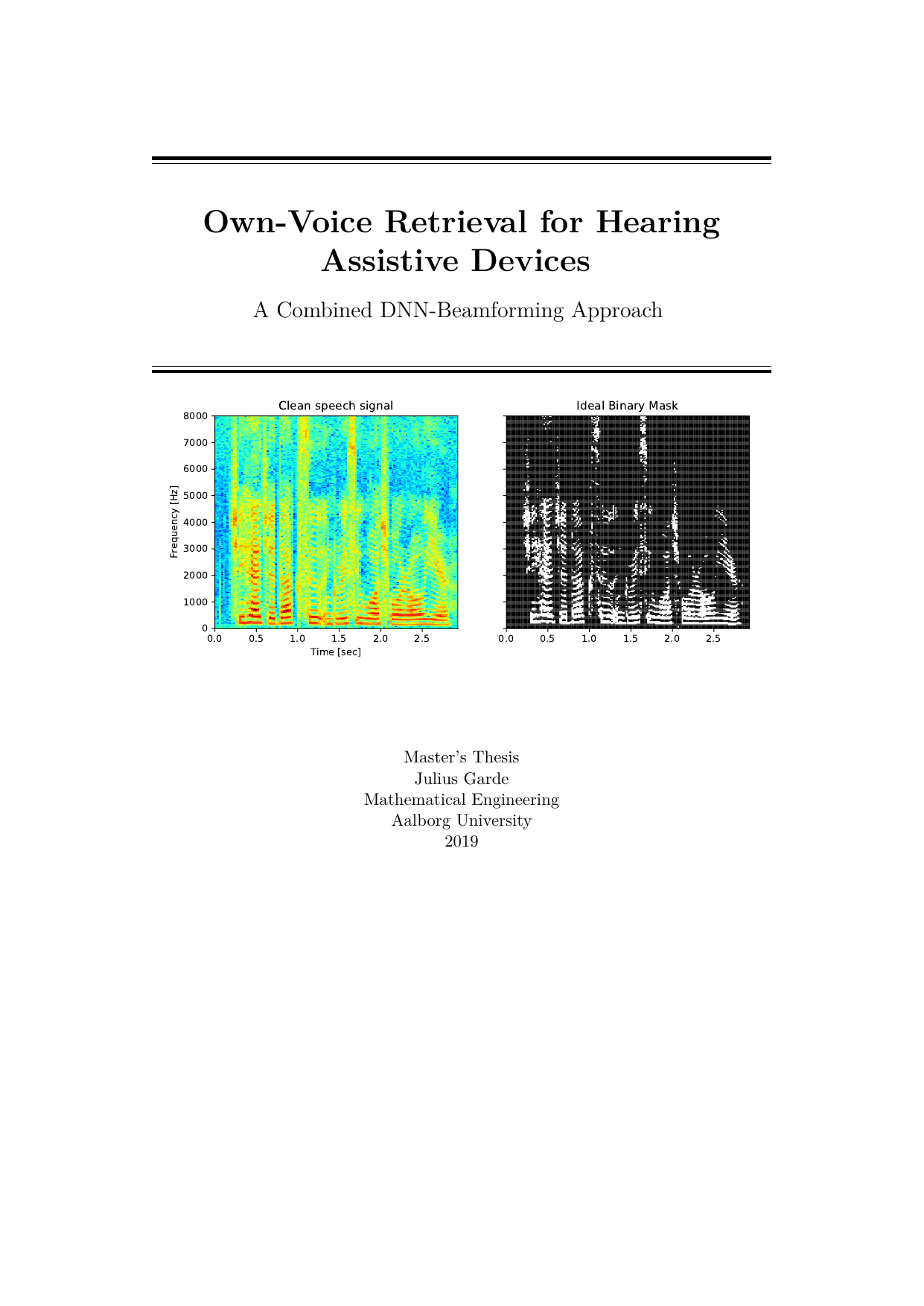

This thesis presents a system that enhances a wearer’s own voice in recordings with background noise. The system is designed with embedded devices in mind, especially hearing assistive devices such as hearing aids. The audio is split into time-frequency units (small slices of the signal across time and frequency), and a convolutional neural network (CNN) classifies each unit as dominated either by noise or by the user’s own voice. These labels are then used to construct two types of beamformer, MVDR (Minimum Variance Distortionless Response) and MWF (Multichannel Wiener Filter), which combine the signals from multiple microphones to emphasize speech and suppress noise. The results show that the MVDR beamformer can considerably improve perceived quality and intelligibility for selected noise types, while speech-shaped noise and babble noise (many voices talking at once) remain challenging.
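The mask-driven MVDR step described above can be illustrated with a minimal NumPy sketch. It is not the thesis implementation: the function names, the array shapes, the use of the principal eigenvector of the speech covariance as a steering-vector estimate, and the diagonal loading constant are all assumptions made for illustration. The time-frequency mask is taken as given here; in the thesis it would come from the CNN classifier.

```python
import numpy as np


def masked_covariance(Y, mask, eps=1e-8):
    """Mask-weighted spatial covariance per frequency bin.

    Y:    complex STFT, shape (M, F, T) = (microphones, bins, frames).
    mask: real weights in [0, 1], shape (F, T).
    Returns an array of shape (F, M, M).
    """
    weighted = Y * mask[None, :, :]
    R = np.einsum("mft,nft->fmn", weighted, Y.conj())
    return R / (mask.sum(axis=1) + eps)[:, None, None]


def mvdr_weights(R_speech, R_noise, ref=0, loading=1e-6):
    """MVDR weights w = Rn^-1 d / (d^H Rn^-1 d) for each frequency bin.

    Assumption: the steering vector d is approximated by the principal
    eigenvector of the speech covariance, scaled so that d[ref] == 1.
    """
    F, M, _ = R_speech.shape
    W = np.zeros((F, M), dtype=complex)
    for f in range(F):
        _, vecs = np.linalg.eigh(R_speech[f])  # eigenvalues ascending
        d = vecs[:, -1]
        if abs(d[ref]) < 1e-12:
            W[f, ref] = 1.0  # fallback: pass the reference microphone through
            continue
        d = d / d[ref]
        # Diagonal loading keeps the noise covariance invertible.
        delta = loading * np.trace(R_noise[f]).real / M + 1e-10
        Rn = R_noise[f] + delta * np.eye(M)
        num = np.linalg.solve(Rn, d)
        W[f] = num / (d.conj() @ num)
    return W


def apply_beamformer(W, Y):
    """Apply w^H y in every time-frequency unit; returns shape (F, T)."""
    return np.einsum("fm,mft->ft", W.conj(), Y)


def enhance_own_voice(Y, voice_mask):
    """Estimate both covariances from the mask, then beamform."""
    R_speech = masked_covariance(Y, voice_mask)
    R_noise = masked_covariance(Y, 1.0 - voice_mask)
    return apply_beamformer(mvdr_weights(R_speech, R_noise), Y)
```

Calling `enhance_own_voice(Y, voice_mask)` with a multichannel STFT and a mask of the same time-frequency shape returns a single-channel enhanced STFT. Units labeled as own voice drive the steering-vector estimate, while the remaining units drive the noise covariance, so errors in the mask propagate directly into the beamformer.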

[This abstract has been rewritten with the help of AI, based on the project's original abstract.]