Automatic detection and severity assessment of stringing defect in FDM 3D printing using deep learning

Author

Herman, Damian Dariusz

Term

4th semester

Publication year

2024

Submitted on

2024-05-31

Pages

73

Abstract

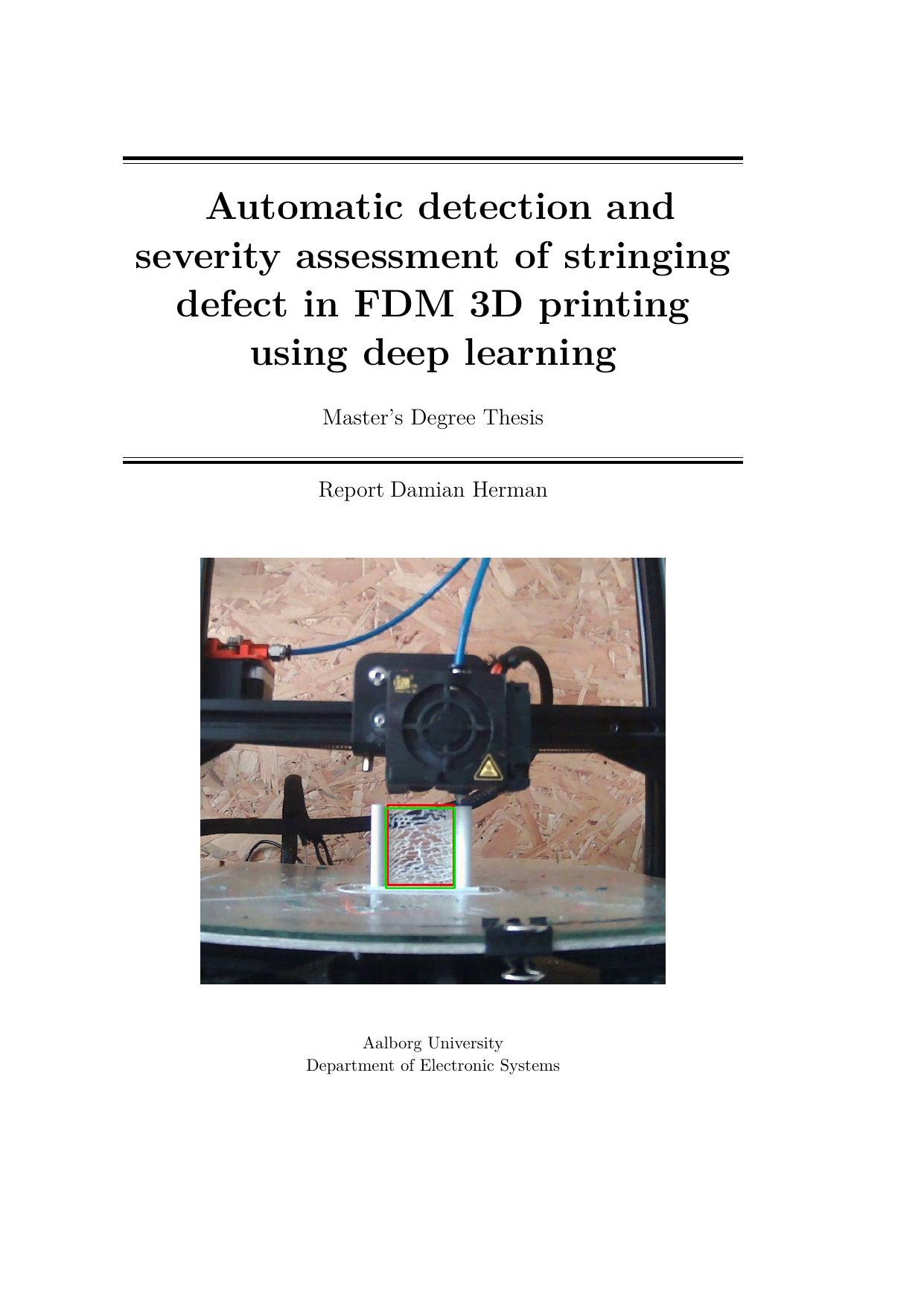

This thesis examines defects that occur during additive manufacturing (3D printing), focusing on stringing: thin strands of material that form between printed parts. It proposes a computer vision approach based on convolutional neural networks (CNNs) that automatically detects stringing and estimates its severity. The pipeline combines YOLOv8, which locates stringing through object detection, with MobileNetV3, which assesses its severity. An object-detection dataset annotated with severity levels was created for this purpose. The models were tested on the dataset's test split and on real webcam footage of the printing process to evaluate whether the solution can support in-process supervision and severity assessment. To judge viability, standard performance metrics were computed, and resource usage was measured to check that the system would not disrupt production. The detection model performs well on the test data but does not generalize to the real-life footage, and the severity assessment model is not accurate enough.
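The two-stage design described above, in which a detector proposes stringing regions and a classifier grades each region, can be sketched as follows. This is a minimal illustration under stated assumptions, not the thesis implementation: `detect` and `classify_severity` are hypothetical stand-ins for the YOLOv8 and MobileNetV3 models, the three-level severity scale and the 0.25 confidence threshold are assumed, and frames are plain nested lists so the example runs without any ML libraries.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional, Tuple

# Frames are plain nested lists of grayscale pixel values so the sketch runs
# without ML libraries; a real system would pass webcam image arrays instead.
Frame = List[List[int]]
Box = Tuple[int, int, int, int]  # (x1, y1, x2, y2), pixel coordinates

# Hypothetical severity scale; the thesis's actual levels may differ.
SEVERITY_LEVELS = ("low", "medium", "high")


@dataclass
class StringingFinding:
    box: Box
    det_confidence: float
    severity: int  # index into SEVERITY_LEVELS


def crop(frame: Frame, box: Box) -> Frame:
    """Cut the detected region out of the frame for the severity classifier."""
    x1, y1, x2, y2 = box
    return [row[x1:x2] for row in frame[y1:y2]]


def run_pipeline(
    frame: Frame,
    detect: Callable[[Frame], Iterable[Tuple[Box, float]]],
    classify_severity: Callable[[Frame], int],
    conf_threshold: float = 0.25,  # assumed cut-off for weak detections
) -> List[StringingFinding]:
    """Stage 1: detect stringing boxes. Stage 2: grade each box's severity."""
    findings = []
    for box, conf in detect(frame):
        if conf < conf_threshold:
            continue  # discard low-confidence detections before classification
        severity = classify_severity(crop(frame, box))
        findings.append(StringingFinding(box, conf, severity))
    return findings


def frame_severity(findings: List[StringingFinding]) -> Optional[str]:
    """Report the worst severity in the frame, or None if no stringing was found."""
    if not findings:
        return None
    return SEVERITY_LEVELS[max(f.severity for f in findings)]


# Usage with hypothetical stub models standing in for YOLOv8 and MobileNetV3.
def stub_detect(frame: Frame):
    # Ignores the frame and returns one confident and one weak detection.
    return [((0, 0, 2, 2), 0.9), ((2, 2, 4, 4), 0.1)]


def stub_classify(patch: Frame) -> int:
    # Grades bright patches as "high" severity and everything else as "low".
    mean = sum(map(sum, patch)) / (len(patch) * len(patch[0]))
    return 2 if mean > 128 else 0


frame = [[200, 200, 0, 0], [200, 200, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]
findings = run_pipeline(frame, stub_detect, stub_classify)
print(frame_severity(findings))  # high
```

Passing the two models in as plain callables keeps the sketch independent of any framework; with real models, `detect` would wrap the detector's inference call and `classify_severity` the classifier's, both of which are left unspecified here.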

[This abstract has been rewritten with the help of AI based on the project's original abstract.]

Keywords