iVAE-GAN: Identifiable VAE-GAN Models for Latent Representation Learning

Authors

Derosche, Kristoffer Calundan; Dideriksen, Bjørn Uttrup

Term

4th term

Education

Publication year

2021

Abstract

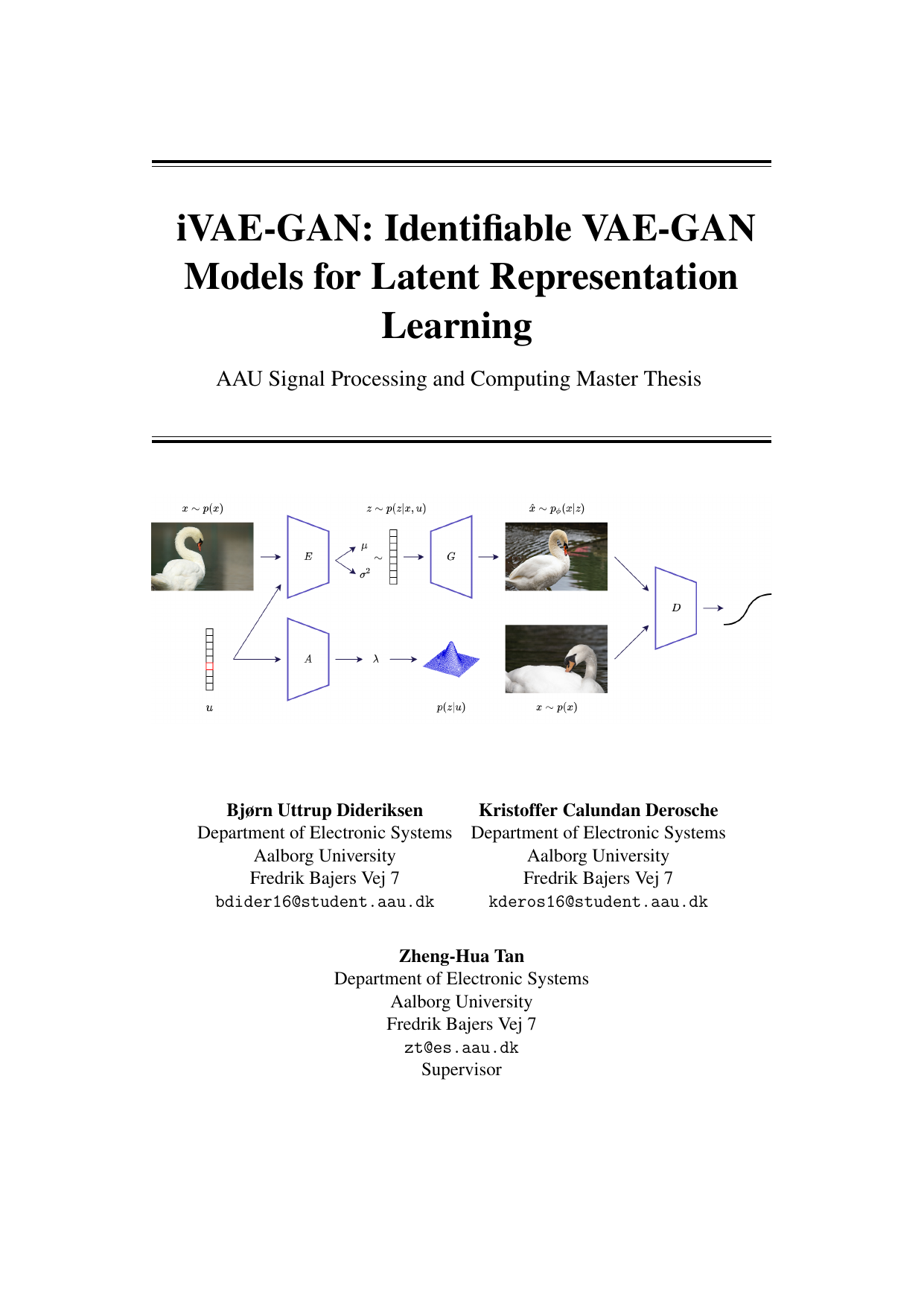

Recent advances in nonlinear Independent Component Analysis (ICA) and identifiable deep latent-variable models characterize when the hidden factors in data (latent variables) can be recovered from raw observations without labels. In particular, identifiability theory shows that, under suitable conditions, unsupervised deep learning can recover the true latent variables up to a simple linear transformation (such as a rotation or scaling). This matters because learning representations that reflect the true underlying factors is a central goal of unsupervised learning. By contrast, many popular representation-learning methods are heuristic and offer no analytical link to the true latent variables. This thesis extends the family of identifiable models with iVAE-GAN, an identifiable Generative Adversarial Network (GAN) that uses variational inference. A GAN trains two networks against each other (a generator and a discriminator), while variational inference approximates complex probability distributions with simpler, tractable ones. With iVAE-GAN, we present what we argue is the first principled adversarial-training route to a theoretically meaningful latent space. We design and implement the iVAE-GAN architecture, prove its identifiability, and support the theory with experiments. Because GANs are among the most powerful deep generative models, adding a GAN objective to identifiable models is an important step. We hope this work encourages further designs of meaningful latent spaces grounded in theory rather than in heuristics alone.


[This abstract has been rewritten with the help of AI based on the project's original abstract]
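The phrase "recovered up to a linear transformation" can be made concrete with a toy sketch in Python (standard library only). It does not implement iVAE-GAN. The "learned" latents are hypothetical stand-ins. One is an invertible linear map of the true sources, which is the kind of result an identifiability guarantee permits. The other is a nonlinear entanglement. Each is scored by the R² of an ordinary-least-squares fit from the estimate back to each true source. This is one common way to check linear identifiability, assumed here and not necessarily the thesis's evaluation protocol. The helper names `solve` and `linear_r2`, the mixing matrix `A`, and the sample size are illustrative choices.

```python
import random


def solve(a, b):
    """Solve the square linear system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix [a | b]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back-substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def linear_r2(features, target):
    """R^2 of an ordinary-least-squares fit (with intercept) of target on features."""
    rows = [f + [1.0] for f in features]  # append an intercept column
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * t for r, t in zip(rows, target)) for i in range(k)]
    w = solve(xtx, xty)  # normal equations: (X^T X) w = X^T y
    pred = [sum(wi * ri for wi, ri in zip(w, r)) for r in rows]
    mean = sum(target) / len(target)
    ss_res = sum((t - p) ** 2 for t, p in zip(target, pred))
    ss_tot = sum((t - mean) ** 2 for t in target)
    return 1.0 - ss_res / ss_tot


random.seed(0)
# Hypothetical ground-truth latents: two independent uniform sources on [-1, 1].
z = [[random.uniform(-1, 1), random.uniform(-1, 1)] for _ in range(2000)]

# Case 1: the estimate equals the truth up to an invertible linear map (det(A) = 6.5).
A = [[2.0, -1.0], [0.5, 3.0]]
z_lin = [[A[i][0] * p[0] + A[i][1] * p[1] for i in range(2)] for p in z]

# Case 2: a nonlinear entanglement of the sources that no linear map can undo.
z_nonlin = [[p[0] * p[1], p[0] ** 2] for p in z]

for name, est in [("linear", z_lin), ("nonlinear", z_nonlin)]:
    # One R^2 score per true source, predicted linearly from the estimated latents.
    scores = [linear_r2(est, [p[k] for p in z]) for k in range(2)]
    print(name, [round(s, 3) for s in scores])
```

Running this should print R² scores of essentially 1 for the linear case and close to 0 for the nonlinear case. A linear probe exactly undoes an invertible linear map, but it cannot undo the nonlinear one. That difference is why recovery "up to a linear transformation" still counts as recovering the true latent variables.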